In the on-premise setting I'm able to upload files and read them using methods in SQL Server like xp_DirTree for reading a directory, and openrowset / bulk insert reading the contents of the file. In an Azure environment this isn't possible.

Lets say the steps for the end user are currently as following:

- The user starts a task where it can upload a file using a 'File upload' control. This upload is bound to an Azure File Storage location, so it'll upload immediately.

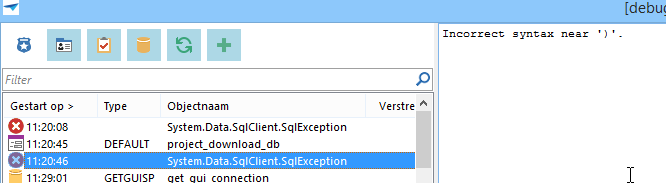

- The user executes the task: The sql procedure of the task is called to process the uploaded file. In example the user uploads a .txt file with some data to be parsed. The result of this action is in example a table record which is added.

Another task an user can do in the product, is generate files (export) and save them on a storage location. In the on-premise situation this would be a network drive. In the cloud environment you don't have a network drive. But I think that could / should be replaced by a Azure File Storage. Because the Azure File Storage can be mounted by the end user as a regular network drive, so they (or another piece of software) could process the generated files.

Same question here: Is it possible to create a file via a SQL stored procedure and store it on a Azure File Storage? Or is something built-in with a process flow possible, saving the results of a stored procedure into a file.

Please note we're using the web client here, as with the new universal client it probably will be web-only.